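Updated to version 1.103.5. So far I have only installed the subtitle-to-speech feature. I ran the log through Doubao, which says the problem is a length limit being exceeded: "From the log you provided, it is clear that the text-to-speech (TTS) service failed. The core problems are 'model processing length exceeded' and a resulting 'file does not exist' error." An excerpt of the log follows: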

2025-08-31 09:44:19.280 [info] Preparing to split text

2025-08-31 09:44:29.944 [info] Preparing for text generation

2025-08-31 09:44:30.292 [info] Initialization: preparing to start

2025-08-31 09:45:40.130 [info] INFO: Started server process [11956]

INFO: Waiting for application startup.

2025-08-31 09:45:40.133 [info] INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:9900 (Press CTRL+C to quit)

2025-08-31 09:45:40.145 [info] Preparing to generate: 好的,各位,欢迎来到2016年9月ICT月度导师计划的第一个教学课程。 [in English: "OK, everyone, welcome to the first teaching session of the September 2016 ICT Monthly Mentorship."]

2025-08-31 09:45:40.147 [info] Model exists

2025-08-31 09:45:40.178 [info] INFO: 127.0.0.1:4696 - "POST /tts/IndexTTS/loadModel HTTP/1.1" 200 OK

2025-08-31 09:45:58.251 [info] 2025-08-31 09:45:58,250 WETEXT INFO building fst for zh_normalizer …

2025-08-31 09:46:57.601 [info] 2025-08-31 09:46:57,599 WETEXT INFO done

2025-08-31 09:46:57,599 WETEXT INFO fst path: c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\utils\tagger_cache\zh_tn_tagger.fst

2025-08-31 09:46:57,599 WETEXT INFO c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\utils\tagger_cache\zh_tn_verbalizer.fst

2025-08-31 09:46:57.736 [info] 2025-08-31 09:46:57,735 WETEXT INFO found existing fst: c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\tn\en_tn_tagger.fst

2025-08-31 09:46:57,735 WETEXT INFO c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\tn\en_tn_verbalizer.fst

2025-08-31 09:46:57,735 WETEXT INFO skip building fst for en_normalizer …

2025-08-31 09:47:04.864 [info] >> Be patient, it may take a while to run in CPU mode.

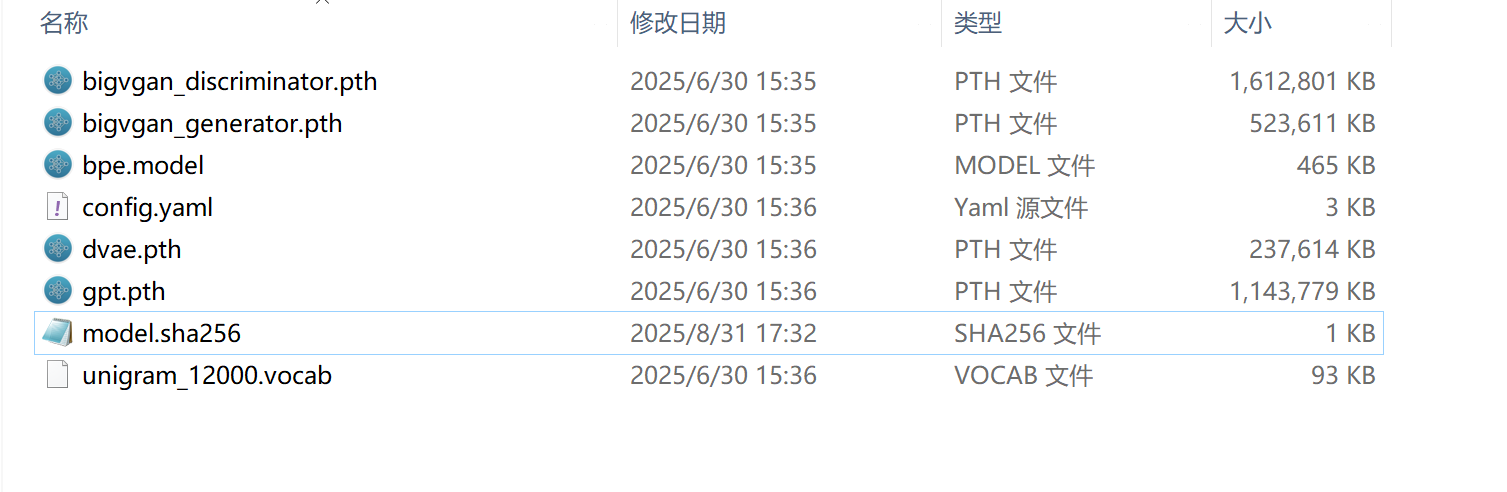

GPT weights restored from: c:/Documents/shenghuabi/config/pythonAddon/model\gpt.pth

Removing weight norm…

bigvgan weights restored from: c:/Documents/shenghuabi/config/pythonAddon/model\bigvgan_generator.pth

TextNormalizer loaded

bpe model loaded from: c:/Documents/shenghuabi/config/pythonAddon/model\bpe.model

start inference…

INFO: 127.0.0.1:4697 - "POST /tts/IndexTTS/text2speech HTTP/1.1" 500 Internal Server Error

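2025-08-31 09:47:04.869 [info] Preparing to generate: 这是八个课程中的第一个。每个月你都会收到八个单独的教学视频,这些课程紧扣当月主题,并相互呼应,让整体更为完整。 [in English: "This is the first of eight sessions. Each month you will receive eight individual teaching videos; they stay close to that month's theme and build on one another to make the whole more complete."]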

2025-08-31 09:47:04.870 [info] Model exists

2025-08-31 09:47:05.412 [info] ERROR: Exception in ASGI application

Traceback (most recent call last):

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\uvicorn\protocols\http\h11_impl.py", line 403, in run_asgi

result = await app( # type: ignore[func-returns-value]

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\uvicorn\middleware\proxy_headers.py", line 60, in __call__

return await self.app(scope, receive, send)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\fastapi\applications.py", line 1054, in __call__

await super().__call__(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\applications.py", line 112, in __call__

await self.middleware_stack(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\middleware\errors.py", line 187, in __call__

raise exc

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\middleware\errors.py", line 165, in __call__

await self.app(scope, receive, _send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\middleware\exceptions.py", line 62, in __call__

await wrap_app_handling_exceptions(self.app, conn)(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\_exception_handler.py", line 53, in wrapped_app

raise exc

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\_exception_handler.py", line 42, in wrapped_app

await app(scope, receive, sender)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\routing.py", line 714, in __call__

await self.middleware_stack(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\routing.py", line 734, in app

await route.handle(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\routing.py", line 288, in handle

await self.app(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\routing.py", line 76, in app

await wrap_app_handling_exceptions(app, request)(scope, receive, send)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\_exception_handler.py", line 53, in wrapped_app

raise exc

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\_exception_handler.py", line 42, in wrapped_app

await app(scope, receive, sender)

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\starlette\routing.py", line 73, in app

response = await f(request)

^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\fastapi\routing.py", line 301, in app

raw_response = await run_endpoint_function(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\fastapi\routing.py", line 212, in run_endpoint_function

return await dependant.call(**values)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib\main.py", line 43, in text2speech

await indexTTS.text2Speech(item.audioPath, item.text, item.options.sentence['max_text_tokens_per_sentence'], item.options.generation.model_dump(), item.output)

File "c:\Documents\shenghuabi\config\pythonAddon\lib\tts\indextts.py", line 24, in text2Speech

result = self.tts.infer(

^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\infer.py", line 573, in infer

codes = self.gpt.inference_speech(auto_conditioning, text_tokens,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\model.py", line 670, in inference_speech

conds_latent = self.get_conditioning(speech_conditioning_mel, cond_mel_lengths)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\model.py", line 497, in get_conditioning

speech_conditioning_input, mask = self.conditioning_encoder(speech_conditioning_input.transpose(1, 2),

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1751, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1762, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\conformer_encoder.py", line 426, in forward

xs, pos_emb, masks = self.embed(xs, masks)

^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1751, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1762, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\conformer\subsampling.py", line 185, in forward

x, pos_emb = self.pos_enc(x, offset)

^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1751, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\torch\nn\modules\module.py", line 1762, in _call_impl

return forward_call(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\conformer\embedding.py", line 140, in forward

pos_emb = self.position_encoding(offset, x.size(1), False)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "c:\Documents\shenghuabi\config\pythonAddon\lib.venv\Lib\site-packages\indextts\gpt\conformer\embedding.py", line 97, in position_encoding

assert offset + size < self.max_len

^^^^^^^^^^^^^^^^^^^^^^^^^^^^

AssertionError
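
Judging only by the frame names in this traceback, the sequence being measured when the assertion fires looks like the speech conditioning input passed to `get_conditioning` (presumably derived from the reference audio at `item.audioPath`), not the text tokens. The Python sketch below is a stand-in for that kind of guard, not the IndexTTS code: the class `PositionTableSketch`, the helper `fits_position_table`, and the `max_len` value of 5000 are all assumptions made for illustration.

```python
class PositionTableSketch:
    """Hypothetical stand-in for a positional encoding with a fixed-size table."""

    def __init__(self, max_len: int = 5000) -> None:
        # max_len is an assumed value; the real limit comes from the model config.
        self.max_len = max_len

    def position_encoding(self, offset: int, size: int) -> range:
        # Same shape of guard as the assertion at the bottom of the traceback:
        # the requested window must end before the table does.
        assert offset + size < self.max_len
        return range(offset, offset + size)


def fits_position_table(num_frames: int, max_len: int, offset: int = 0) -> bool:
    """Check up front whether a sequence would pass the guard above."""
    return offset + num_frames < max_len


if __name__ == "__main__":
    table = PositionTableSketch(max_len=5000)
    print(fits_position_table(1200, table.max_len))  # short input passes the check
    print(fits_position_table(6000, table.max_len))  # long input would trip the assert
```

If that reading is right, the length of the reference audio may matter more here than `max_text_tokens_per_sentence`, but I have not verified this against the library.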

…

…

2025-08-31 10:02:16.770 [error] [Error: ENOENT: no such file or directory, open 'c:\Documents\shenghuabi\config\pythonAddon\chunk\65a9013f-f8a8-5592-b37e-99e6dd595fc4.wav'] {

errno: -4058,

code: 'ENOENT',

syscall: 'open',

path: 'c:\Documents\shenghuabi\config\pythonAddon\chunk\65a9013f-f8a8-5592-b37e-99e6dd595fc4.wav'

}
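
The `ENOENT` above is consistent with the earlier 500 response: if the `text2speech` request fails, no `.wav` chunk is written, and a later attempt to open it finds nothing. I can't see how the app itself handles this, so the following is only a Python sketch of the check a caller could make before reading a chunk. The function name `read_chunk` and its parameters are hypothetical and do not come from the application.

```python
from pathlib import Path
from typing import Optional


def read_chunk(status_code: int, wav_path: str) -> Optional[bytes]:
    """Return the chunk's bytes, or None if the request failed or no file exists."""
    # A non-200 response (such as the 500 in the log) means nothing was generated.
    if status_code != 200:
        return None
    path = Path(wav_path)
    # Guard against the ENOENT case: only open the file if it is really there.
    if not path.is_file():
        return None
    return path.read_bytes()
```

Returning `None` instead of raising keeps the original failure (the 500) as the error to report, rather than the follow-on missing-file error.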